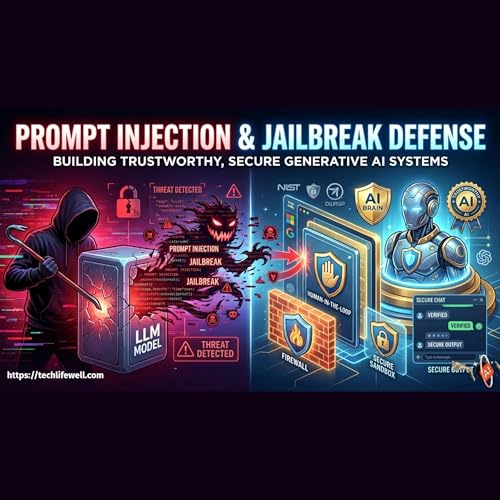

Prompt Injection & Jailbreak Defense: Building Trustworthy, Secure Generative AI Systems | Artificial Intelligence

About this title

Prompt injection and jailbreaks aren’t “edge cases” anymore—they’re the frontline threats shaping how we build Responsible AI. In this episode, we unpack the security reality of generative AI and large language models, and why trust must be engineered from day one.

Using guidance inspired by NIST and OWASP, we break down how prompt injection works: malicious inputs manipulate model behavior to trigger data exfiltration, leak sensitive context, or drive unauthorized tool calls and actions in agentic workflows. Then we dive into real-world defenses discussed by leaders like Microsoft, Google, and OpenAI: automated red teaming, instruction hierarchies, and real-time prompt shields designed to isolate untrusted data and reduce attack surface.

You’ll learn why modern GenAI security needs a multi-layer approach: probabilistic detection paired with deterministic controls like sandboxed environments, strict permissions, and human-in-the-loop approvals for risky actions. Finally, we zoom out to the Responsible AI toolkit—continuous monitoring, transparency methods like watermarking, and collaborative bug bounty programs—to keep systems resilient as threats evolve.
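To make the multi-layer idea concrete, here is a minimal Python sketch of how a probabilistic detector score might be paired with deterministic controls. Everything in it is an assumption for illustration and not taken from the episode: the tool names, the `ALLOWED_TOOLS` and `RISKY_TOOLS` tables, the `injection_score` input (imagined as coming from some separate classifier), the `0.5` threshold, and the `approve` callback standing in for a human reviewer.

```python
from dataclasses import dataclass
from typing import Callable

# Hypothetical policy tables; a real system would load these from config.
ALLOWED_TOOLS = {"search_docs", "send_email"}   # strict permissions (allowlist)
RISKY_TOOLS = {"send_email"}                    # actions needing human approval


@dataclass(frozen=True)
class ToolCall:
    name: str
    args: dict


def gate_tool_call(
    call: ToolCall,
    injection_score: float,
    approve: Callable[[ToolCall], bool],
    threshold: float = 0.5,
) -> str:
    """Return "allow" or "deny" for a tool call requested by the model."""
    # Deterministic layer: anything off the allowlist is denied outright,
    # regardless of what the detector thinks.
    if call.name not in ALLOWED_TOOLS:
        return "deny"
    # Probabilistic layer: an assumed detector score in [0, 1]; scores at or
    # above the threshold are treated as a likely injection.
    if injection_score >= threshold:
        return "deny"
    # Human-in-the-loop layer: risky actions need explicit approval.
    if call.name in RISKY_TOOLS:
        return "allow" if approve(call) else "deny"
    return "allow"
```

In this sketch the allowlist check runs first, so a missed detection cannot unlock a tool that was never permitted; the detector only narrows what the deterministic rules already allow.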

If you build, deploy, or rely on LLMs, this episode is your roadmap to safer agents, stronger governance, and AI you can actually trust.

Subscribe on your preferred podcast app to stay updated: https://pod.link/1866629282

#ResponsibleAI #AIsecurity #LLMSecurity #GenAISecurity #PromptInjection #Jailbreak #OWASP #NIST #AIRiskManagement #RedTeaming #PromptShield #SecureByDesign #AgenticAI #ToolUseSecurity #DataExfiltration #Sandboxing #HumanInTheLoop #AIGovernance #BugBounty #Watermarking